AdA Project

Research group “Audio-visual rhetorics of affect” - FU Berlin + HPI Potsdam

Film-Analytical Annotations

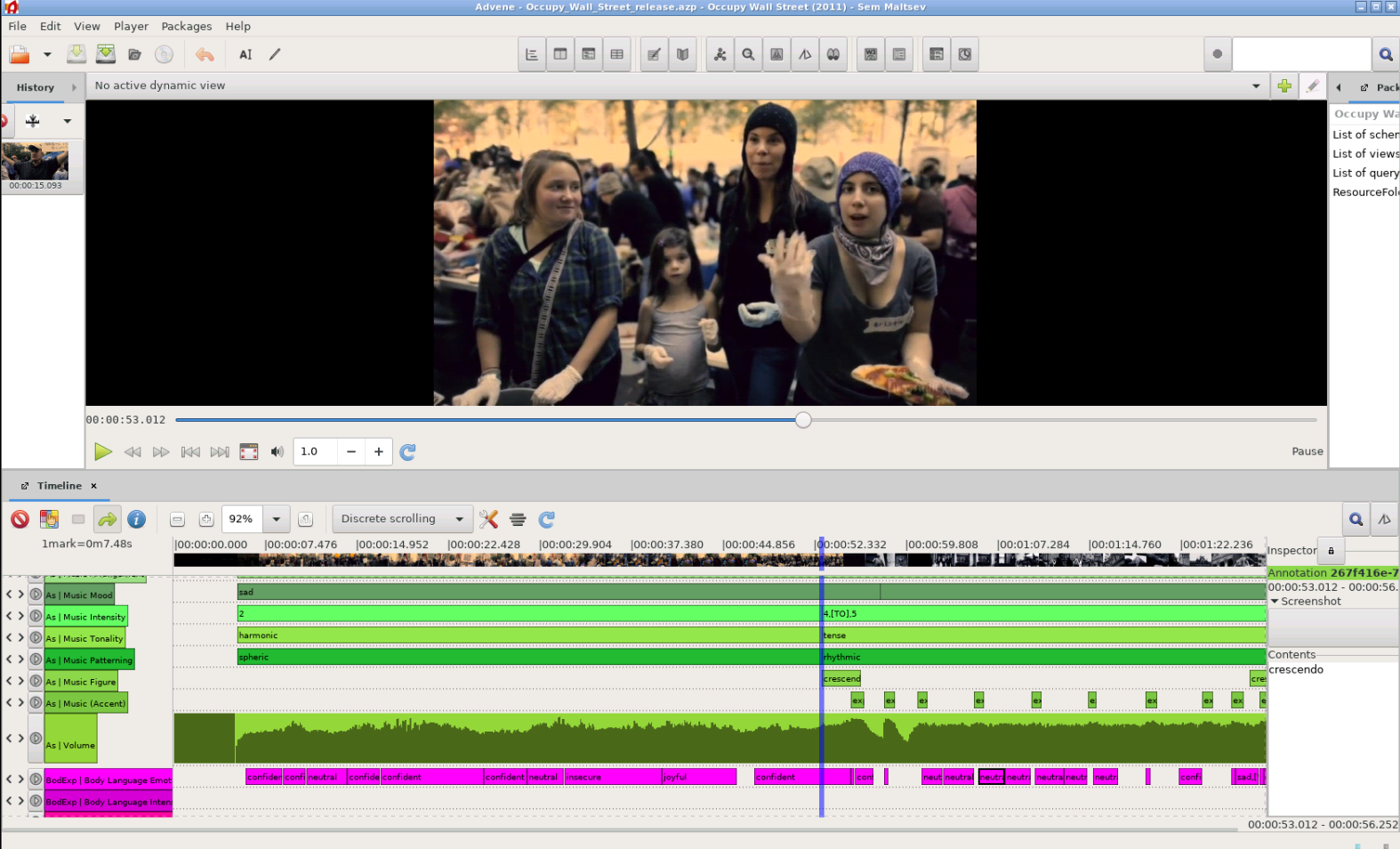

Image Credit: Screenshot of Advene annotation software showing the Occupy Wall Street video.

Over the course of the project, the FU Berlin team created a high-quality dataset of manual film-analytical annotations for a corpus of feature films, documentaries, and television news. These annotations are published here as Linked Open Data under the CC BY-SA 3.0 license, making them available to other film scholars as well as to researchers from other domains.

Annotation Creation

The annotations were created using a film-scholarly analytical framework (the eMAEX method) for studying the aesthetics of audio-visual images. The annotation work followed a strict routine in order to describe the films of the corpus precisely at different levels of description (see ontology).

The annotation process was carried out with Advene, a free software toolkit for annotating audio-visual documents. We worked closely with Olivier Aubert, one of the authors of Advene, both to improve the user interface for faster annotation work and to enable the import of the AdA filmontology and the export of W3C-compliant video annotations as RDF data.

To create annotations that conform to the AdA filmontology, you can use the Advene template package that we provide in our GitHub repository.

Structure

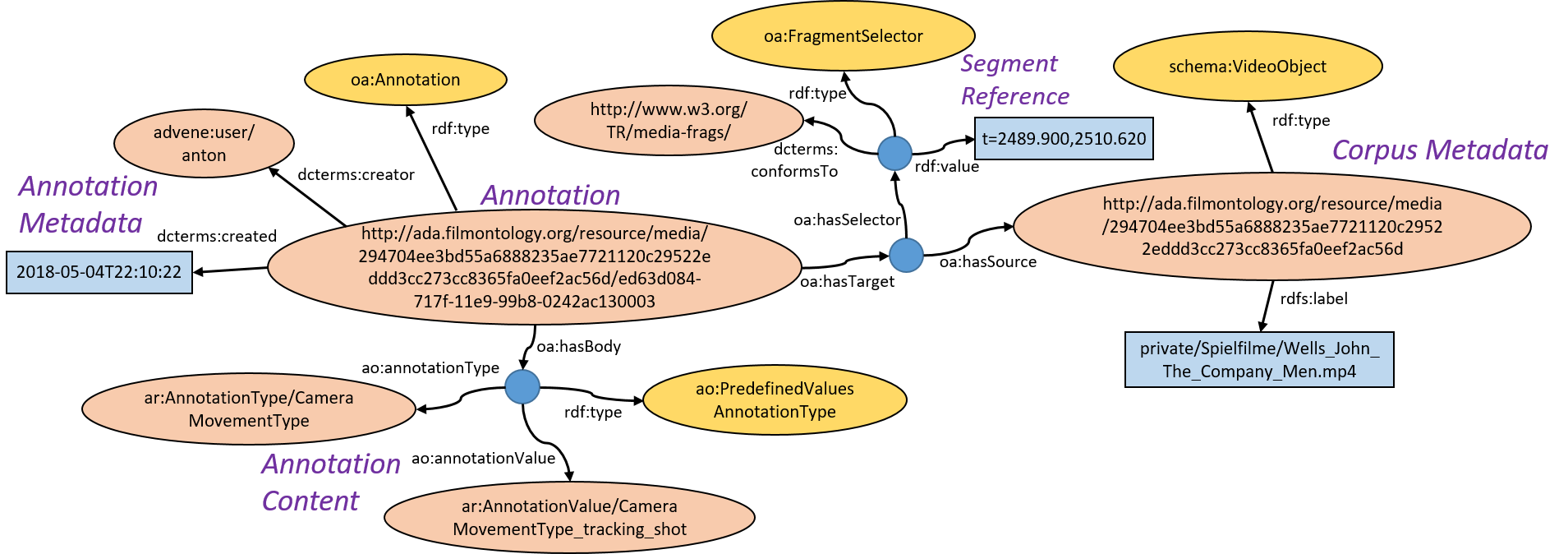

The datasets created in the AdA project consist of thousands of annotations that use timecode-based references to the original video material. Each annotation applies to a fragment of a movie that is delimited by a start and an end timecode. The content of the annotation is the film-analytical concept described by the annotator, expressed with the annotation types and values defined in the AdA filmontology. Each annotation also contains metadata about its author and creation date.

For example, the following information is available to characterize camera movement in minute 41 of the feature film “The Company Men”:

| Field | Value |
|---|---|
| Annotation ID | ed63d084-717f-11e9-99b8-0242ac130003 |
| Begin timecode | 00:41:29.900 |
| End timecode | 00:41:50.620 |
| Annotation Type | Camera Movement Type |
| Annotation Value | tracking shot |
| Author | anton |
| Date | 2018-05-04 22:10:22 |

Encoding

Our annotations are encoded using the W3C Web Annotation Data Model. An annotation in this model is a relationship between resources, which normally consists of a body (the description) and a target (an external resource, e.g., a movie, an MP3 file, or a PDF document). Since we have to refer to parts of external resources (video fragments), we use the W3C Media Fragments URI standard to encode temporal references in URIs. In the example above, the annotation type and value are encoded in the annotation body, and the video segment from 00:41:29.900 to 00:41:50.620 is referenced, in seconds, with the timecode interval t=2489.900,2510.620.

Image: A semantic video annotation as RDF graph.
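The encoding described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the project's actual export code: the video URI, the `urn:uuid:` annotation identifier, and the plain-text body are placeholders, and the real AdA export may structure the body differently. What the sketch does show is how the timecodes from the example turn into the temporal fragment `t=2489.900,2510.620`, and how a body and a target fit together in the Web Annotation Data Model.

```python
import json
from decimal import Decimal


def timecode_to_seconds(timecode: str) -> Decimal:
    """Convert an HH:MM:SS.mmm timecode into seconds (exact decimal arithmetic)."""
    hours, minutes, seconds = timecode.split(":")
    return Decimal(hours) * 3600 + Decimal(minutes) * 60 + Decimal(seconds)


def temporal_fragment(begin: str, end: str) -> str:
    """Build the temporal part of a Media Fragments URI, e.g. 't=10.000,20.500'."""
    return f"t={timecode_to_seconds(begin):.3f},{timecode_to_seconds(end):.3f}"


# Hypothetical video URI; the published data uses its own identifiers.
VIDEO_URI = "http://example.org/videos/the-company-men"

annotation = {
    "@context": "http://www.w3.org/ns/anno.jsonld",
    # Assumption: the annotation ID from the example, wrapped as a URN.
    "id": "urn:uuid:ed63d084-717f-11e9-99b8-0242ac130003",
    "type": "Annotation",
    # Simplified stand-in for the body; type and value are given as plain text.
    "body": {
        "type": "TextualBody",
        "value": "Camera Movement Type: tracking shot",
    },
    # The target points at a fragment of the video via a FragmentSelector.
    "target": {
        "source": VIDEO_URI,
        "selector": {
            "type": "FragmentSelector",
            "conformsTo": "http://www.w3.org/TR/media-frags/",
            "value": temporal_fragment("00:41:29.900", "00:41:50.620"),
        },
    },
}

print(json.dumps(annotation, indent=2))
```

`Decimal` is used instead of `float` so that the millisecond part of the timecode is carried over without rounding artifacts.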

Online Access

All annotations are published online in our triplestore. The annotations can be accessed through their URIs. Here are some examples:

| Film | Annotation kind | Timecode | Content |
|---|---|---|---|
| The Company Men | Annotation with one predefined value | Timecode 00:41:29-00:41:50 | Camera Movement Type: tracking shot |
| The Company Men | Annotation with evolving values | Timecode 00:41:29-00:41:50 | Camera Angle Canted: level [TO] tilt right |
| Inside Job | Annotation with a text value | Timecode 00:01:05-00:01:13 | Dialogue transcript |

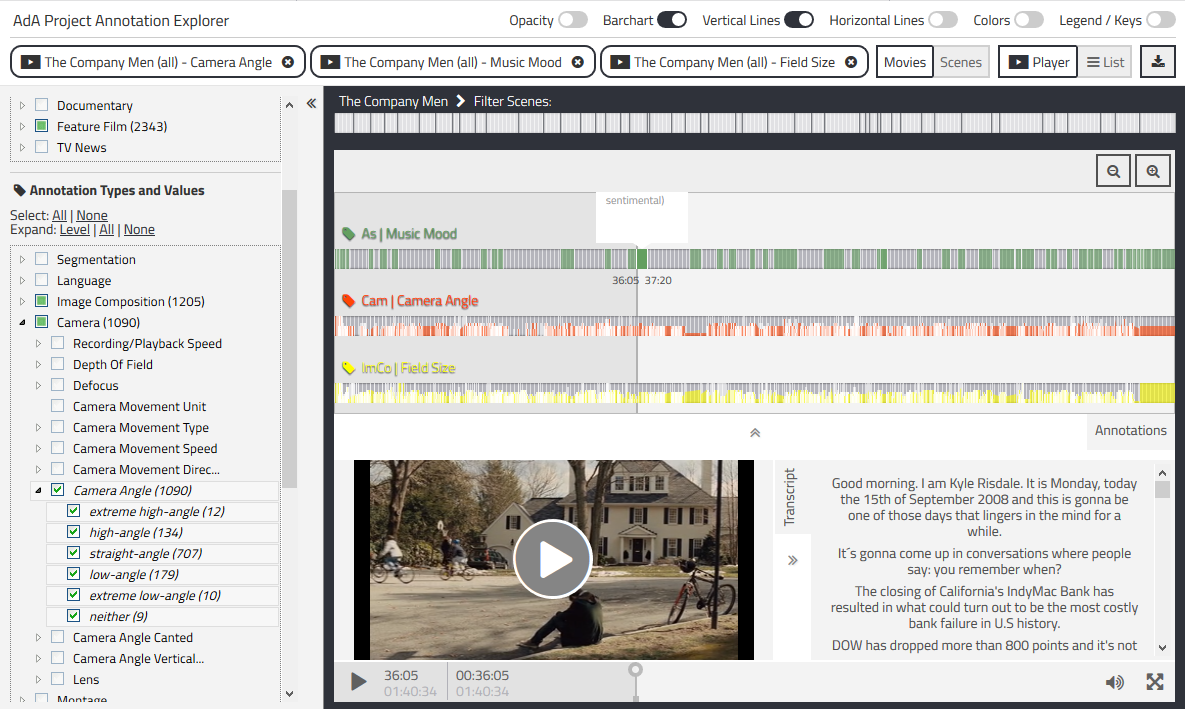

We also developed the Annotation Explorer, a web-based application for querying, analyzing, and visualizing semantic video annotations. It provides consistent access to more than 90,000 annotations. The raw RDF data can also be queried through our public SPARQL endpoint.

Image: Screenshot of our Annotation Explorer web app with queries of annotations from the film "The Company Men".
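As a rough illustration of querying the raw RDF data, the following sketch prepares a SPARQL request using only the Python standard library. The endpoint address is a placeholder, not the project's real URL, and the query assumes that the published triples use the standard Web Annotation vocabulary (`oa:Annotation`, `oa:hasBody`, `oa:hasTarget`). The request is only built here, not sent.

```python
from urllib.parse import urlencode
from urllib.request import Request

# Placeholder: substitute the real address of the public SPARQL endpoint.
SPARQL_ENDPOINT = "https://example.org/sparql"

# Assumption: annotations are typed oa:Annotation and carry a body and a target.
QUERY = """
PREFIX oa: <http://www.w3.org/ns/oa#>
SELECT ?annotation ?body ?target
WHERE {
  ?annotation a oa:Annotation ;
              oa:hasBody ?body ;
              oa:hasTarget ?target .
}
LIMIT 10
"""


def build_request(endpoint: str, query: str) -> Request:
    """Prepare a SPARQL-protocol GET request that asks for JSON results."""
    url = f"{endpoint}?{urlencode({'query': query})}"
    return Request(url, headers={"Accept": "application/sparql-results+json"})


request = build_request(SPARQL_ENDPOINT, QUERY)
print(request.full_url)
```

To run the query against a real endpoint, pass `request` to `urllib.request.urlopen` and parse the response body with `json.load`.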

Download

All annotation datasets are available for download in our GitHub repository, either as RDF exports in Turtle format (.ttl) or as .azp packages that can be opened and visualized in Advene. Currently, we provide annotations for the following movies:

| Movie | # Annotations |
|---|---|
| Capitalism: A Love Story | 19917 |
| Inside Job | 14422 |
| Margin Call | 546 |
| Occupy Wall Street | 581 |
| Tagesschau 2008-07-15 | 442 |
| Tagesschau 2008-09-08 | 345 |
| Tagesschau 2008-09-12 | 495 |
| Tagesschau 2008-09-13 | 414 |
| Tagesschau 2008-09-15 | 565 |
| Tagesschau 2008-09-16 | 829 |
| Tagesschau 2008-09-17 | 532 |
| Tagesschau 2008-09-18 | 487 |
| Tagesschau 2008-09-19 | 805 |
| Tagesschau 2008-09-20 | 314 |
| Tagesschau 2008-09-21 | 512 |
| Tagesschau 2008-09-22 | 534 |
| Tagesschau 2008-09-23 | 520 |
| Tagesschau 2008-09-24 | 415 |
| Tagesschau 2008-09-25 | 608 |
| Tagesschau 2008-09-26 | 470 |
| Tagesschau 2008-09-29 | 439 |
| Tagesschau 2008-09-30 | 684 |
| The Big Short | 22892 |
| The Company Men | 24285 |
| Total | 92053 |